Your eyes tell whether you are interested

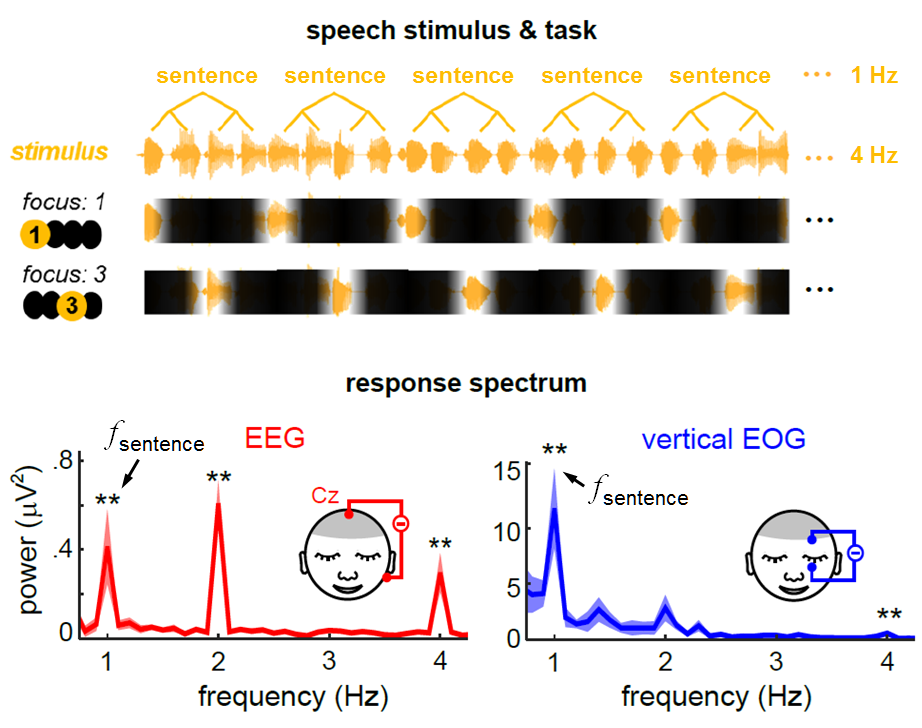

The research team headed by DING Nai at the College of Biomedical Engineering and Instrument Sciences published an article entitled “Eye Activity Tracks Task-Relevant Structures during Speech and Auditory Sequence Perception” in the December 18 issue of Nature Communications. Their results suggest that your eye movements may reveal whether you are paying attention while listening to speech.

In daily life, many things call for our attention. For example, we need to listen attentively in class, stay focused behind the wheel, and stay alert to an opponent's movements in sports. Attention, however, cannot stay at its peak all the time. This is why an excellent teacher develops a comfortable rhythm of teaching, sometimes fast and sometimes slow, which helps students stay focused in class. By the same token, an effective strategy for information processing is to process the most important information while attention is at its optimal state. How, then, does the brain regulate its attention state in real time?

Using the vertical electrooculogram (EOG), Professor Ding and his colleagues found that eye muscle activity increases after a sound sequence, even when the eyes are closed. More importantly, the phase of sentence-tracking ocular activity is strongly modulated by temporal attention, i.e., by which word in a sentence is attended. This structure-tracking eye activity reflects cortical motor activation, and it may be a general mechanism for allocating temporal attention during sequence processing.

Their results offer a new perspective on how the brain can switch rapidly between attention states. They also open up a novel approach to monitoring cortical attention states via a computer-based visual device.